I have always been intrigued by the fact that although web site usability is proven to be an essential element in web design and development, there still exists an abundance of web sites with poor usability. This paradox is the result of limitations which I have written about in an earlier article on this blog.

If these limitations can be overcome, then usability will become a more mainstream procedure, something which will result in more usable web sites.

This article contains an overview of the research that I carried out prior to developing a framework whose objective is to address this paradox. I have published most of it in a research paper in the International Journal of Human Computer Interaction (Vol. 2, Issue 1, pp. 10-30). I have also presented my findings at the DotNetNuke Web Connections Conference 2011, which was held at the University of Hamburg.

The need for Automated Web Site Usability Evaluation

Usability Evaluation (UE) is the process of measuring usability and recognizing explicit usability problems [1]. Its main goal is to identify the main issues in the user interface that may lead to human error, terminate the user's interaction with the system, or cause user frustration. This article will discuss how automating this process can contribute towards mainstreaming web site usability.

Automated Usability Evaluation provides a number of advantages and overcomes the limitations of its non-automated counterpart. Such advantages include:

- Reduction of the costs of usability evaluation: It is widely accepted that usability evaluation is both a time-consuming and expensive process [2] [3]. Through automation, the evaluation can be done more quickly and hence more cheaply.

- Overcoming inconsistency in the usability problems that are identified: By removing the human element, automation removes the inconsistencies in the usability problems that are detected as well as any misinterpretations and wrong application of usability guidelines. This overcomes the human element limitations reported by some researchers [4] [5] [6] [7].

- Prediction of the time and costs of errors across a whole design: Automated evaluation tools perform usability evaluation methodically, so they are more consistent and cover a wider area than evaluation methods carried out by humans. As a result, one can better predict the time and cost required to repair the usability errors that are identified.

- Reduction of the need to engage usability experts to carry out the usability evaluation: It is generally agreed that usability experts are a scarce resource [4] [8] [9]. Therefore, the use of such tools will be of assistance to designers who do not have such expert skills.

- Increase in the coverage of the usability aspects that are evaluated: Automation overcomes commercial constraints such as time, cost and resources, which typically limit the depth of evaluation [3]. Automated evaluation software, such as that which generates possible usage traces, enables evaluators to direct their effort towards aspects of the interface that may easily be overlooked.

- Enabling comparison between different potential designs: Once again, commercial constraints typically limit the evaluation to one design or a small group of features [10]. Automated evaluation software, in particular that which provides automated analysis, gives designers an environment in which alternative designs can be evaluated.

- Enabling evaluation in various stages of the design process: This contrasts with non-automated usability evaluation methods such as user testing, whereby the interface or a prototype needs to be developed before usability evaluation can be carried out. The advantage is that an interface can be evaluated, and any usability issues identified and resolved, early on, thus saving the time and costs that would be incurred should they be addressed at a later stage.

- Enabling remote evaluation: If the automated usability evaluation tool is located on-line, then evaluation can be done remotely, thus overcoming the high costs associated with logistics.

But first, a word of caution

Despite the advantages that they bring to usability evaluation, automated usability evaluation tools should only be used in conjunction with standard usability evaluation techniques. Such tools should always be used with caution, and one should never rely on their results alone: there is little evidence supporting their effectiveness, and there is no proof that web sites developed with such tools are more usable than those developed without them.

It has also been observed that despite advances in technology, automation has never been effective enough to enable a tool or a user agent to behave like a human and display human attributes such as common sense [11] [12]. Past attempts at this have always resulted in tools that were too specific and application related, very costly, and over-simplified.

However, despite these limitations and the fact that automated usability evaluation is regarded as unexplored territory, it looks promising, especially for the usability evaluation of large web sites, for the interpretation of guidelines (especially in Heuristic Evaluation, where guideline interpretation is required) and as a complement to traditional user testing techniques.

The Specifications of my proposed solution

Through my experience and the research I carried out, I was able to draw a number of important conclusions. These conclusions justify the need for a solution to address the limitations that are preventing web site usability evaluation from becoming a mainstream procedure within web development. They also outline the specifications that such a solution should have. In fact, the solution I set out to develop needed to:

- Fully automate the usability evaluation process: Based on the classification presented by Ivory and Hearst [10], a fully automated evaluation tool must automatically capture, analyse and critique. Thus, these three functionalities need to be present in my proposed solution.

- Be specifically developed to evaluate usability: Tools for accessibility analysis and web usage analysis are not optimal tools through which one can evaluate the usability of a web site. This is why I set out to develop a tool with the aim of using it specifically for usability evaluation.

- Evaluate the usability of a web site using the inspection method: Research shows that Heuristic Evaluation (a usability inspection method that references usability guidelines) manages to detect the majority of global usability issues, including the ones that are hardest to find [3] [13] [14]. In this regard, the proposed tool will evaluate the usability of a web site through the inspection method by referencing research-based web site usability guidelines.

- Base its evaluation on conformance to standards: Based on the classification of automated usability evaluation tools by Chi et al. [15], the proposed tool will be one that makes use of conformance to standards. During my research I did not encounter such a tool, which further justifies the need for me to develop it.

- Automatically reference research-based usability guidelines: Web site usability guidelines are the optimal way in which one can understand web site usability [1] [16]. Moreover, studies indicate that adherence to guidelines contributes towards improving the usability of a web site [3] [10] [17].

- Be flexible: The proposed solution should allow the evaluator to add, modify or delete the guidelines that it references without any programming. Thus, the guidelines need to be separate from the evaluation logic. Additionally, the solution should be flexible enough to enable evaluation during various stages of the design and development process.

- Be web based: The proposed solution will be web based so as to overcome the high costs associated with logistics. This would also make the solution more easily available and at a lower cost (if any), hence contributing towards mainstreaming web site usability.
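To make the "capture, analyse and critique" and "Be flexible" requirements more concrete, here is a minimal Python sketch of how guidelines could be kept as plain data, separate from the evaluation logic. It is only an illustration under my own assumptions and not the actual implementation of the framework: the three guidelines, their identifiers and the `capture`, `analyse`, `critique` and `evaluate` function names are all hypothetical, and a real tool would reference research-based guidelines rather than simple tag counts.

```python
from collections import Counter
from html.parser import HTMLParser

# Hypothetical guidelines, kept as plain data so that an evaluator can add,
# modify or delete entries without changing the evaluation logic below.
GUIDELINES = [
    {"id": "G1", "tag": "title", "min": 1, "max": 1,
     "advice": "Give every page exactly one descriptive title."},
    {"id": "G2", "tag": "h1", "min": 1, "max": 1,
     "advice": "Use a single main heading per page."},
    {"id": "G3", "tag": "marquee", "min": 0, "max": 0,
     "advice": "Avoid scrolling text."},
]


class TagCounter(HTMLParser):
    """Capture step: record how often each start tag occurs."""

    def __init__(self):
        super().__init__()
        self.counts = Counter()

    def handle_starttag(self, tag, attrs):
        self.counts[tag] += 1


def capture(html):
    """Parse the page and return a Counter of tag occurrences."""
    parser = TagCounter()
    parser.feed(html)
    parser.close()
    return parser.counts


def analyse(counts, guidelines):
    """Analysis step: return the guidelines whose bounds are violated."""
    return [g for g in guidelines
            if not g["min"] <= counts[g["tag"]] <= g["max"]]


def critique(violations, counts):
    """Critique step: turn each violation into a readable suggestion."""
    return [
        f'{g["id"]}: found {counts[g["tag"]]} <{g["tag"]}> element(s). {g["advice"]}'
        for g in violations
    ]


def evaluate(html, guidelines=GUIDELINES):
    """Run capture, analysis and critique in sequence."""
    counts = capture(html)
    return critique(analyse(counts, guidelines), counts)


if __name__ == "__main__":
    page = "<html><head></head><body><h1>Home</h1><h1>News</h1></body></html>"
    for line in evaluate(page):
        print(line)
```

Because the engine only reads the `GUIDELINES` list, changing what is evaluated means editing data rather than code, which is the kind of separation the flexibility requirement asks for.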

Conclusion

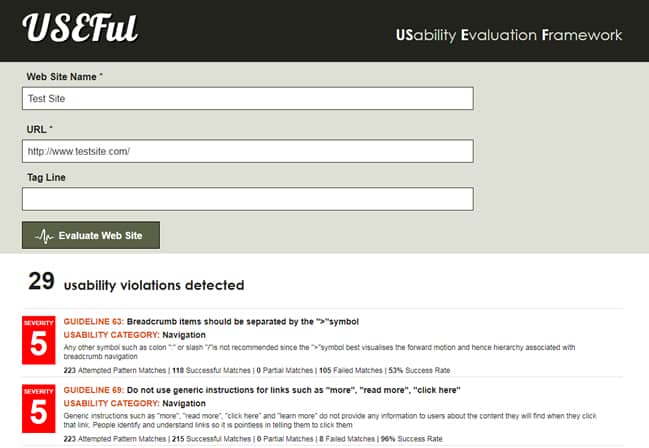

Based on the research presented in this article I proceeded to develop USEFul (USability Evaluation Framework), a framework whose objective is to automate web site usability evaluation. Through this automation it becomes easier to integrate usability evaluation within the web development process and hence mainstream web site usability. I have written about the USEFul framework in another post on this blog. Additionally, if you are interested in its technical aspects and would like to see some of the results we managed to attain with it, I recommend downloading my research paper (pp. 10-30).

References

1. Dix, A., Finlay, J., Abowd, G.D. & Beale, R., 2004. Human Computer Interaction. 3rd ed. Essex, England: Pearson Education Ltd.

2. Beirekdar, A., Vanderdonckt, J. & Noirhomme-Fraiture, M., 2002. A framework and a language for usability automatic evaluation of web sites by static analysis of HTML source code. In 4th International Conference on Computer-Aided Design of User Interfaces., 2002.

3. Tobar, L.M., Latorre Andrés, P.M. & Lafuente Lapena, E., 2008. WebA: a tool for the assistance in design and evaluation of websites. Journal of Universal Computer Science, pp.1496-512.

4. Beirekdar, A., Vanderdonckt, J. & Noirhomme-Fraiture, M., 2002. KWARESMI – knowledge-based web automated evaluation tool with reconfigurable guidelines optimization. In Proc. 9th International Workshop on Design, Specification, and Verification of Interactive Systems DSV-IS., 2002.

5. Beirekdar, A. et al., 2005. Flexible reporting for automated usability and accessibility evaluation of web sites. INTERACT, pp.281-94.

6. Borges, J.A., Morales, I. & Rodríguez, N.J., 1996. Guidelines for designing usable world wide web pages. In Proc. Conference on Human Factors in Computing Systems: common ground. Vancouver, British Columbia, Canada, 1996.

7. Burmester, M. & Machate, J., 2003. Creative design of interactive products and use of usability guidelines – a contradiction? In Jacko, J., Stephanidis, C. & Harris, D. Human-computer interaction: theory and practice. Mahwah, NJ, United States: Lawrence Erlbaum Associates Inc. pp.43-46.

8. Montero, F., Gonzáles, P., Lozano, M. & Vanderdonckt, J., 2005. Quality models for automated evaluation of web sites usability and accessibility. In International COST294 Workshop on User Interface Quality Model. Rome, Italy, 2005.

9. Vanderdonckt, J., Beirekdar, A. & Noirhomme-Fraiture, M., 2004. Automated evaluation of web usability and accessibility by guideline review. Lecture Notes in Computer Science, pp.17-30.

10. Ivory, M.Y. & Hearst, M.A., 2001. The state of the art in automating usability evaluation of user interfaces. ACM Computing Surveys (CSUR), December. pp.470-516.

11. Norman, K. & Panizzi, E., 2006. Levels of automation and user participation in usability testing. Interacting with Computers, March. pp.246-64.

12. Tiedtke, T., Märtin, C. & Gerth, N., 2002. AWUSA – a tool for automated website usability analysis. In PreProceedings of the 9th International Workshop on Design, Specification, and Verification of Interactive Systems DSV-IS’2002., 2002.

13. Jeffries, R., Miller, J., Wharton, C. & Uyeda, K.M., 1991. User interface evaluation in the real world: a comparison of four techniques. In Proc. ACM Computer Human Interaction CHI’91 Conference. New Orleans, LA, United States, 1991.

14. Otaiza, R., Rusu, C. & Roncagliolo, S., 2010. Evaluating the usability of transactional web sites. In Third International Conference on Advances in Computer-Human Interactions. Saint Maarten, Netherlands Antilles, 2010.

15. Chi, E.H. et al., 2003. The Bloodhound project: automating discovery of web usability issues using the InfoScent™ simulator. In Proc. ACM CHI 03 Conf. Ft.Lauderdale, Florida, United States, 2003.

16. Fraternali, P. & Tisi, M., 2008. Identifying cultural markers for web application design targeted to a multi-cultural audience. In 8th International Conference on Web Engineering ICWE’08. Yorktown, New Jersey, United States, 2008.

17. Comber, T., 1995. Building usable web pages: an HCI perspective. In Proc. Australian Conference on the Web AusWeb’95. Ballina, Australia, 1995.
